About

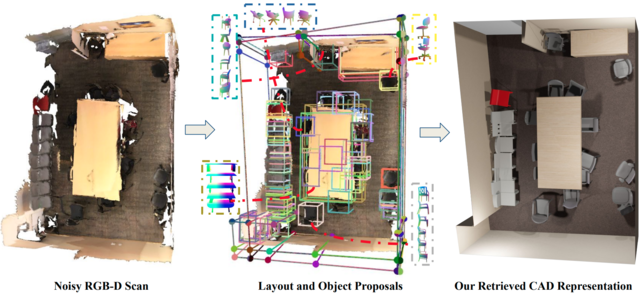

We explore how a general AI algorithm can be used for 3D scene understanding to reduce the need for training data. More precisely, we propose a modification of the Monte Carlo Tree Search (MCTS) algorithm to retrieve objects and room layouts from noisy RGB-D scans. While MCTS was developed as a game-playing algorithm, we show that it can also be used for complex perception problems. Our adapted MCTS algorithm has few, easy-to-tune hyperparameters and can optimise general losses. We use it to optimise the posterior probability of object and room layout hypotheses given the RGB-D data. This results in an analysis-by-synthesis approach that explores the solution space by rendering the current solution and comparing it to the RGB-D observations. To make this exploration more efficient, we propose simple changes to the tree construction and exploration policy of standard MCTS. We demonstrate our approach on the ScanNet dataset, where our method often retrieves configurations that are better than some of the manual annotations, especially for room layouts.
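For readers less familiar with MCTS, the loop it runs (selection, expansion, rollout, backpropagation) can be sketched as a generic UCT search (MCTS with the UCB1 selection rule). This is a minimal sketch under stated assumptions, not the MCSS implementation: it uses plain UCT without the modified tree construction and exploration policy mentioned above, and the names `uct_search`, `slots` and `score` are hypothetical. Here proposals are assumed to be grouped into "slots", a complete hypothesis picks at most one proposal per slot, and `score` stands in for the posterior-based objective.

```python
import math
import random
from typing import Callable, List, Optional, Sequence, Tuple

# Hypothetical encoding: proposals are grouped into "slots" (e.g. one slot per candidate
# object region). A full hypothesis picks one proposal id per slot, or None to leave
# that slot empty. This is an illustrative assumption, not the MCSS tree structure.
Choice = Optional[int]


class Node:
    def __init__(self, depth: int, parent: Optional["Node"] = None,
                 choice: Choice = None) -> None:
        self.depth = depth             # number of slots decided on the path to this node
        self.parent = parent
        self.choice = choice           # decision taken for slot depth - 1
        self.children: List["Node"] = []
        self.untried: List[Choice] = []
        self.visits = 0
        self.total = 0.0               # sum of rollout scores backed up through this node


def path(node: Node) -> List[Choice]:
    """Decisions taken from the root down to `node`."""
    choices: List[Choice] = []
    while node.parent is not None:
        choices.append(node.choice)
        node = node.parent
    return choices[::-1]


def ucb1(child: Node, parent_visits: int, c: float) -> float:
    """Mean score plus an exploration bonus that favours rarely visited children."""
    return (child.total / child.visits
            + c * math.sqrt(math.log(parent_visits) / child.visits))


def uct_search(slots: Sequence[Sequence[int]],
               score: Callable[[List[Choice]], float],
               iterations: int = 500, c: float = 1.4,
               seed: int = 0) -> Tuple[List[Choice], float]:
    """Return the best-scoring full hypothesis seen; `slots` must be non-empty."""
    rng = random.Random(seed)
    root = Node(0)
    root.untried = [None, *slots[0]]
    best: Tuple[List[Choice], float] = ([], -math.inf)

    for _ in range(iterations):
        node = root
        # 1. Selection: follow UCB1 while the current node is fully expanded.
        while not node.untried and node.children:
            parent = node
            node = max(parent.children, key=lambda ch: ucb1(ch, parent.visits, c))
        # 2. Expansion: attach one untried decision as a new child.
        if node.untried:
            choice = node.untried.pop(rng.randrange(len(node.untried)))
            child = Node(node.depth + 1, node, choice)
            if child.depth < len(slots):
                child.untried = [None, *slots[child.depth]]
            node.children.append(child)
            node = child
        # 3. Rollout: fill the remaining slots at random and score the full hypothesis.
        solution = path(node) + [rng.choice([None, *s]) for s in slots[node.depth:]]
        value = score(solution)
        if value > best[1]:
            best = (solution, value)
        # 4. Backpropagation: update statistics on the path back to the root.
        while node is not None:
            node.visits += 1
            node.total += value
            node = node.parent
    return best
```

For example, with `slots = [[0, 1], [2, 3]]` and a score that counts agreements with a target `[1, 3]`, the search returns `([1, 3], 2)`. Because scene search is a single-player optimisation rather than a game, the sketch returns the best complete hypothesis seen in any rollout instead of the most-visited root child.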

Technical Description

Given a scene consisting of registered RGB-D images, we first extract proposals that potentially belong to the furniture and structural components of the scene. Our MCTS-based approach, which we call Monte Carlo Scene Search (MCSS), then efficiently finds the set of proposals that best fits the given scene. For this, we rely on a scoring function that enforces semantic and geometric consistency.
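To make "semantic and geometric consistency" concrete, an analysis-by-synthesis score can compare a rendering of the current hypothesis against the observations pixel by pixel. The sketch below is hypothetical and much simpler than the posterior MCSS optimises: it assumes the observed and rendered depth maps and per-pixel semantic labels are already available as flattened lists, and the function name `consistency_score`, the 5 cm depth tolerance and the equal weighting `w_sem` are illustrative choices, not values from the paper.

```python
from typing import Optional, Sequence


def consistency_score(observed_depth: Sequence[Optional[float]],
                      rendered_depth: Sequence[Optional[float]],
                      observed_label: Sequence[int],
                      rendered_label: Sequence[int],
                      depth_tol: float = 0.05,
                      w_sem: float = 0.5) -> float:
    """Hypothetical per-pixel agreement between a rendered hypothesis and the scan.

    Depths are in metres; None marks a missing measurement (observed) or a pixel
    where nothing was rendered. Labels are semantic class ids per pixel.
    """
    geo_hits = sem_hits = valid = 0
    for d_obs, d_ren, l_obs, l_ren in zip(observed_depth, rendered_depth,
                                          observed_label, rendered_label):
        if d_obs is None:
            continue  # no sensor measurement: nothing to compare against
        valid += 1
        # Geometric term: rendered surface lies within depth_tol of the observed surface.
        if d_ren is not None and abs(d_ren - d_obs) <= depth_tol:
            geo_hits += 1
        # Semantic term: rendered class agrees with the observed class.
        if l_ren == l_obs:
            sem_hits += 1
    if valid == 0:
        return 0.0
    return ((1.0 - w_sem) * geo_hits + w_sem * sem_hits) / valid
```

A score of this kind, where higher means the rendering explains more of the observation, is the sort of objective a tree search over proposals could maximise; a realistic objective would also need priors, for instance against physically intersecting objects.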

Resources

- Code: https://github.com/montescene

- Synthetic point-cloud dataset of object scans, used for proposal generation: available here

- Relabelled SceneCAD dataset with more detailed annotations: available here

- arXiv paper and supplementary material: https://arxiv.org/abs/2103.07969

Evaluations

- Evaluation results for room layout estimation on our refined annotations from the SceneCAD dataset:

| Method | Precision | Recall | IoU |
|---|---|---|---|
| SceneCAD GT | 91.2 | 80.4 | 75.0 |
| MCSS (Ours) | 85.5 | 86.1 | 75.8 |

- Evaluation of object detection and pose estimation accuracy on the Scan2CAD validation scenes using precision and recall metrics:

| Method | IoU Th. | Chair precision | Chair recall | Sofa precision | Sofa recall | Table precision | Table recall | Bed precision | Bed recall |
|---|---|---|---|---|---|---|---|---|---|
| All Proposals | 0.50 | 0.06 | 0.92 | 0.05 | 0.93 | 0.05 | 0.68 | 0.16 | 0.93 |
| All Proposals | 0.75 | 0.04 | 0.59 | 0.04 | 0.56 | 0.03 | 0.46 | 0.08 | 0.48 |
| Baseline | 0.50 | 0.70 | 0.85 | 0.77 | 0.80 | 0.66 | 0.56 | 0.74 | 0.74 |
| Baseline | 0.75 | 0.19 | 0.29 | 0.31 | 0.39 | 0.24 | 0.30 | 0.30 | 0.41 |
| MCSS (Ours) | 0.50 | 0.75 | 0.87 | 0.79 | 0.93 | 0.65 | 0.59 | 0.86 | 0.86 |
| MCSS (Ours) | 0.75 | 0.27 | 0.32 | 0.42 | 0.42 | 0.34 | 0.30 | 0.41 | 0.44 |
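The page does not spell out how detections are matched to ground truth at a given IoU threshold. The sketch below shows one common protocol under explicit assumptions: boxes are axis-aligned (the actual evaluation may use oriented boxes), predictions are matched greedily in the order given, and each ground-truth box can be claimed only once. The names `iou_3d` and `precision_recall` are hypothetical.

```python
from typing import Sequence, Tuple

# Assumed box format: (xmin, ymin, zmin, xmax, ymax, zmax), axis-aligned.
# Oriented boxes would need a polygon-clipping IoU instead.
Box = Tuple[float, float, float, float, float, float]


def volume(box: Box) -> float:
    return (box[3] - box[0]) * (box[4] - box[1]) * (box[5] - box[2])


def iou_3d(a: Box, b: Box) -> float:
    """Intersection over union of two axis-aligned 3D boxes."""
    inter = 1.0
    for axis in range(3):
        overlap = min(a[axis + 3], b[axis + 3]) - max(a[axis], b[axis])
        if overlap <= 0:
            return 0.0
        inter *= overlap
    return inter / (volume(a) + volume(b) - inter)


def precision_recall(pred: Sequence[Box], gt: Sequence[Box],
                     thresh: float) -> Tuple[float, float]:
    """Greedy one-to-one matching: each ground-truth box is claimed at most once."""
    matched = set()
    true_pos = 0
    for p in pred:
        best_j, best_iou = -1, thresh
        for j, g in enumerate(gt):
            iou = iou_3d(p, g)
            if j not in matched and iou >= best_iou:
                best_j, best_iou = j, iou
        if best_j >= 0:
            matched.add(best_j)
            true_pos += 1
    precision = true_pos / len(pred) if pred else 0.0
    recall = true_pos / len(gt) if gt else 0.0
    return precision, recall
```

This also illustrates the "All Proposals" rows: keeping every proposal yields many unmatched predictions (low precision) while covering most ground-truth objects (high recall), which is the trade-off the search is meant to resolve.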

- Evaluation of object alignment accuracy (%) on the Scan2CAD benchmark:

| Method | Object-object relationship modelling | Chair | Sofa | Bed |
|---|---|---|---|---|
| Baseline | No | 42.02 | 27.70 | 18.52 |
| Scan2CAD | No | 44.26 | 30.66 | 30.11 |
| E2E | No | 73.04 | 76.92 | 48.15 |
| SceneCAD | Yes | 81.26 | 82.86 | 45.60 |
| MCSS (Ours) | No | 74.32 | 78.70 | 24.28 |
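Our understanding of the Scan2CAD alignment criterion is that a predicted CAD model counts as correctly aligned when its category matches and its translation, rotation and scale errors are within 20 cm, 20° and 20% respectively; treat these thresholds as an assumption to check against the official evaluation script. The sketch below applies such a check to a single prediction. The function names are hypothetical, the scale-error formula is an assumption, and the symmetry handling of the official benchmark is not reproduced.

```python
import math
from typing import Sequence

Vec3 = Sequence[float]
Quat = Sequence[float]  # unit quaternion (w, x, y, z)


def rotation_error_deg(q_pred: Quat, q_gt: Quat) -> float:
    """Angle of the relative rotation between two unit quaternions, in degrees."""
    # abs(): q and -q represent the same rotation.
    dot = abs(sum(a * b for a, b in zip(q_pred, q_gt)))
    return math.degrees(2.0 * math.acos(min(1.0, dot)))


def alignment_ok(cat_pred: str, t_pred: Vec3, q_pred: Quat, s_pred: Vec3,
                 cat_gt: str, t_gt: Vec3, q_gt: Quat, s_gt: Vec3,
                 max_t: float = 0.20, max_r_deg: float = 20.0,
                 max_s: float = 0.20) -> bool:
    """Hypothetical per-object check; thresholds assumed from the Scan2CAD setup."""
    if cat_pred != cat_gt:
        return False
    t_err = math.dist(t_pred, t_gt)  # Euclidean distance, assumed to be in metres
    r_err = rotation_error_deg(q_pred, q_gt)
    # Assumed scale error: |mean per-axis ratio pred/gt - 1| (symmetry ignored).
    ratios = [p / g for p, g in zip(s_pred, s_gt)]
    s_err = abs(sum(ratios) / len(ratios) - 1.0)
    return t_err <= max_t and r_err <= max_r_deg and s_err <= max_s
```

Per-class accuracy would then be the fraction of ground-truth objects of that class for which some prediction passes this check.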

- One-way Chamfer distance between the retrieved models and the scan point cloud on the ScanNet validation set (lower is better):

| Method | Chair | Sofa | Table | Bed |
|---|---|---|---|---|
| Baseline | 2.6 | 11.0 | 14.2 | 26.3 |
| MCSS (Ours) | 2.3 | 7.8 | 13.2 | 18.7 |
| Scan2CAD annotations | 2.0 | 5.2 | 5.6 | 9.3 |
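A one-way Chamfer distance averages, over the points of one set, the distance to the nearest point of the other set, so unlike the symmetric version it depends on direction. The sketch below assumes the direction runs from points sampled on the retrieved model to the scan point cloud and uses plain (not squared) Euclidean distances; both are assumptions, as is the function name `one_way_chamfer`, and the units of the table above are not stated on this page.

```python
import math
from typing import Sequence, Tuple

Point = Tuple[float, float, float]


def one_way_chamfer(source: Sequence[Point], target: Sequence[Point]) -> float:
    """Mean distance from each source point to its nearest target point.

    Brute force, O(len(source) * len(target)); a k-d tree would be used for real scans.
    """
    if not source or not target:
        raise ValueError("both point sets must be non-empty")
    total = 0.0
    for p in source:
        total += min(math.dist(p, q) for q in target)
    return total / len(source)
```

Swapping the arguments changes the result: with `source = [(0, 0, 0), (1, 0, 0)]` and `target = [(0, 0, 0), (0, 0, 3)]`, the model-to-scan direction gives 0.5 while the reverse gives 1.5. Measuring from the model to the scan penalises model surfaces that have no supporting scan points, without penalising scan clutter that the model does not cover.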