About

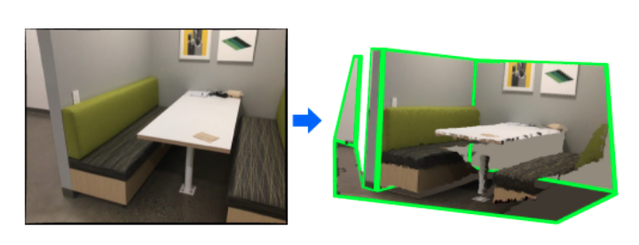

We present a novel method to reconstruct the 3D layout of a room (walls, floors, ceilings) from a single perspective view, even for general, non-cuboid configurations. The input view can consist of a color image only, but adding a depth map results in a more accurate reconstruction. Our approach is based on solving a constrained discrete optimization problem that selects the polygons belonging to the layout from a large set of candidate polygons. To deal with occlusions between components of the layout, a problem ignored by previous work, we introduce an analysis-by-synthesis method that iteratively refines the 3D layout estimate.

This work was supported by the Christian Doppler Laboratory for Semantic 3D Computer Vision, funded in part by Qualcomm Inc.

Technical Description

Given a real image of a room, we first extract depth, semantic segmentation, and planar segments from the image. We use this information to infer the 3D parameters of the planes belonging to the layout components in the image. By intersecting the planes, we obtain a list of candidate polygons for each layout component. We then find the optimal set of polygons for the layout by solving a discrete optimization problem that reasons about both the 2D and 3D quality of the estimated layout. To handle occlusions between layout components, we introduce a Render-and-Compare approach that detects inconsistencies and iteratively refines the layout.
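
The plane-intersection step can be illustrated with a minimal sketch. It is not taken from the released implementation: the plane parameterization `[a, b, c, d]` (with `a*x + b*y + c*z + d = 0`), the function names `intersect_three_planes` and `candidate_corners`, and the tolerance `eps` are all assumptions made for illustration. The sketch only computes candidate 3D corners from triples of planes; building polygons from these corners and selecting among them is not shown.

```python
from itertools import combinations

import numpy as np


def intersect_three_planes(planes, eps=1e-8):
    """Return the 3D point shared by three planes, or None if they are degenerate.

    Each plane is a row [a, b, c, d] with a*x + b*y + c*z + d = 0
    (an assumed parameterization, chosen for this illustration).
    """
    planes = np.asarray(planes, dtype=float)
    normals, offsets = planes[:, :3], planes[:, 3]
    # A near-zero determinant means at least two planes are (almost) parallel
    # or the three planes share a line, so no unique corner exists.
    if abs(np.linalg.det(normals)) < eps:
        return None
    # Solve normals @ x = -offsets for the intersection point x.
    return np.linalg.solve(normals, -offsets)


def candidate_corners(planes):
    """Intersect every triple of planes and keep the well-defined corners."""
    corners = []
    for triple in combinations(planes, 3):
        point = intersect_three_planes(triple)
        if point is not None:
            corners.append(point)
    return corners


# Hypothetical room: floor (y = -1), ceiling (y = 2), two walls (x = 2, z = 5).
room_planes = [
    [0.0, 1.0, 0.0, 1.0],   # floor:   y + 1 = 0
    [0.0, 1.0, 0.0, -2.0],  # ceiling: y - 2 = 0
    [1.0, 0.0, 0.0, -2.0],  # wall:    x - 2 = 0
    [0.0, 0.0, 1.0, -5.0],  # wall:    z - 5 = 0
]
corners = candidate_corners(room_planes)
```

In this toy room, triples that contain both the floor and the ceiling are rejected because those planes are parallel, leaving one floor corner and one ceiling corner. In practice, the estimated plane parameters are noisy, so the degeneracy tolerance would likely need tuning.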

ScanNet-Layout Dataset

In addition, we introduce the ScanNet-Layout dataset for benchmarking general 3D room layout estimation from a single view. The benchmark includes 293 views from the ScanNet dataset that span different layout settings. The views are equally distributed between cuboid and general room layouts and include challenging views that are neglected in existing room layout datasets. In some cases, we include similar viewpoints to evaluate the effects of noise (e.g., motion blur). Our benchmark supports evaluation metrics in both 2D and 3D. If you are interested in evaluating your approach on our benchmark, please follow this link.

Code Release

The implementation of our approach is publicly available. If you are interested in trying out our approach, please follow this link to our GitHub repository.

Related Student Projects

3D Room Layout Inpainting