Hybrid One-Shot 3D Hand Pose Estimation

This page provides downloads for our BMVC'15 paper Hybrid One-Shot 3D Hand Pose Estimation by Exploiting Uncertainties.

Figure panels: (a), (b), (c)

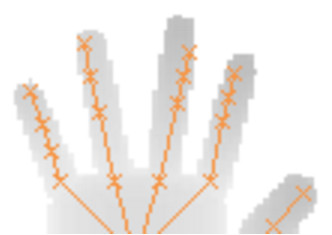

A learned joint regressor might fail to recover the pose of a hand due to ambiguities or a lack of training data (a). We make use of the inherent uncertainty of a regressor by forcing it to generate multiple proposals (b). The crosses show the top three proposals for the proximal interphalangeal joint of the ring finger, for which the corresponding ground truth position is drawn in green. The marker size of each proposal corresponds to its degree of confidence. Our subsequent model-based optimisation procedure exploits these proposals to estimate the true pose (c).
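To illustrate how several weighted proposals per joint can guide the choice of a hand pose, here is a minimal toy sketch in Python. It is not the objective or optimiser from the paper: the function name `proposal_score`, the Gaussian weighting, the bandwidth `sigma` and all numbers are assumptions made for this example only.

```python
import numpy as np

def proposal_score(model_joints, proposals, confidences, sigma=10.0):
    """Score a candidate pose by how well its joints agree with the proposals.

    model_joints: (J, 3) joint positions of a candidate hand pose
    proposals:    (J, K, 3) K proposed positions per joint
    confidences:  (J, K) confidence of each proposal
    sigma:        assumed distance bandwidth (hypothetical value)
    """
    # Distance from each joint to each of its K proposals, shape (J, K).
    dists = np.linalg.norm(proposals - model_joints[:, None, :], axis=2)
    # Confidence-weighted Gaussian agreement with each proposal.
    agreement = confidences * np.exp(-dists**2 / (2.0 * sigma**2))
    # Each joint is explained by its best-matching proposal.
    return float(agreement.max(axis=1).sum())

# Two joints with two proposals each (made-up values).
proposals = np.array([
    [[0.0, 0.0, 0.0], [10.0, 0.0, 0.0]],
    [[0.0, 5.0, 0.0], [0.0, -5.0, 0.0]],
])
confidences = np.array([[0.9, 0.1], [0.6, 0.4]])

# Candidate A sits on the high-confidence proposals, candidate B on the others.
pose_a = np.array([[0.0, 0.0, 0.0], [0.0, 5.0, 0.0]])
pose_b = np.array([[10.0, 0.0, 0.0], [0.0, -5.0, 0.0]])

score_a = proposal_score(pose_a, proposals, confidences)
score_b = proposal_score(pose_b, proposals, confidences)
print(score_a > score_b)
```

In this toy setting a model-based optimiser would search over pose parameters for the pose with the highest score; here only two fixed candidates are compared.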

Download

We used synthetically generated data to evaluate the influence of the major processing steps and to compare against the approach from FORTH. The datasets were acquired at FORTH.

- Download the synthetic training set (~25 MB)

- Download the synthetic test sequence (TrackSeq) (~4 MB)

The packages contain our results (for TrackSeq) as well as further descriptions and example scripts illustrating usage of the data.

Our results for the ICVL and NYU datasets can be downloaded here (~19 MB) or from the GitHub repository.

Material

The BMVC'15 paper, extended abstract and slides can be downloaded here:

- Paper (PDF)

- Extended Abstract (PDF)

- Slides (PDF)

Citation

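If you use these datasets or results, please cite our paper:

Georg Poier, Konstantinos Roditakis, Samuel Schulter, Damien Michel, Horst Bischof and Antonis A. Argyros. Hybrid One-Shot 3D Hand Pose Estimation by Exploiting Uncertainties. In Proc. British Machine Vision Conference (BMVC), 2015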

BibTeX reference for convenience:

@inproceedings{Poier2015,
  author = {Georg Poier and Konstantinos Roditakis and Samuel Schulter and Damien Michel and Horst Bischof and Antonis A. Argyros},
  title = {{Hybrid One-Shot 3D Hand Pose Estimation by Exploiting Uncertainties}},
  booktitle = {{Proc. British Machine Vision Conference (BMVC)}},
  year = {2015}
}
