Annotated Facial Landmarks in the Wild (AFLW)

Annotated Facial Landmarks in the Wild (AFLW) provides a large-scale collection of annotated face images gathered from the web, exhibiting a large variety of appearance (e.g., pose, expression, ethnicity, age, gender) as well as of general imaging and environmental conditions. In total, about 25k faces are annotated with up to 21 landmarks per face.

Database

The images were gathered from Flickr. The following table gives a short comparison with other important face databases that provide annotated landmarks:

| Database | # landmarked images | # landmarks | # subjects | Image size (px) | Image color | Ref. |
|---|---|---|---|---|---|---|
| Caltech 10,000 Web Faces | 10,524 | - | - | - | color | [1] |
| CMU/VASC Frontal | 734 | 6 | - | - | grayscale | [10] |
| CMU/VASC Profile | 590 | 6 to 9 | - | - | grayscale | [11] |
| IMM | 240 | 58 | 40 | 640x480 | color/grayscale | [9] |
| MUG | 401 | 80 | 26 | 896x896 | color | [8] |
| AR Purdue | 508 | 22 | 116 | 768x576 | color | [5] |
| BioID | 1,521 | 20 | 23 | 384x286 | grayscale | [3] |
| XM2VTS | 2,360 | 68 | 295 | 720x576 | color | [6] |
| BUHMAP-DB | 2,880 | 52 | 4 | 640x480 | color | [2] |
| MUCT | 3,755 | 76 | 276 | 480x640 | color | [7] |
| PUT | 9,971 | 30 | 100 | 2048x1536 | color | [4] |
| AFLW | 25,993 | 21 | - | - | color | - |

Description

The motivation for the AFLW database is the need for a large-scale, multi-view, real-world face database with annotated facial features. We gathered the images from Flickr using a wide range of face-relevant tags (e.g., face, mugshot, profile face). The downloaded images were then manually scanned for ones containing faces. The key data and most important properties of the database are:

- The database contains about 25k annotated faces in real-world images. Of these faces, 59% are tagged as female and 41% as male (updated). Some images contain multiple faces. No rescaling or cropping has been performed. Most of the images are in color, although some are grayscale.

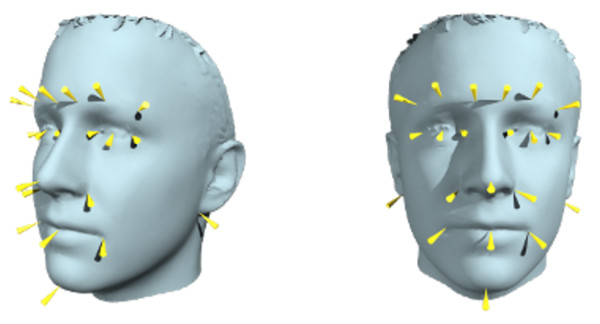

- In total, AFLW contains roughly 380k manually annotated facial landmarks based on a 21-point markup. Landmarks are annotated only if they are visible. If a facial landmark, e.g., the left ear lobe, is not visible, no annotation is present for it.

- A wide range of natural face poses is captured. The database is not limited to frontal or near-frontal faces.

- In addition to the landmark annotations, the database provides face rectangles and ellipses. The ellipses are compatible with the FDDB protocol. Further, we include the coarse head pose obtained by fitting a mean 3D face with the POSIT algorithm.

- A rich set of tools for working with the annotations is provided. One example is a database backend that allows importing other face collections and annotation types. A graphical user interface is also provided for viewing and manipulating the annotations.
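AFLW annotates a landmark only when it is visible, so each face carries a variable subset of the 21-point markup. The sketch below uses a simple in-memory representation for illustration: a fixed-length list with `None` in the slots of invisible points. This is an assumption, not the format of the AFLW files or tools. The sketch shows how per-face visibility and overall coverage could be computed. It also shows how a localization error could be restricted to visible points when testing a landmark detector. All names and coordinates are hypothetical.

As a rough check on the figures above: 25,993 faces × 21 points allow at most 545,853 landmarks. About 380k annotations therefore correspond to roughly 14.6 visible points (about 70%) per face on average.

```python
import math
from typing import List, Optional, Tuple

NUM_POINTS = 21  # size of the AFLW landmark markup

Point = Tuple[float, float]
# Hypothetical representation: one slot per markup point, None = landmark not visible.
Landmarks = List[Optional[Point]]


def visible_indices(landmarks: Landmarks) -> List[int]:
    """Indices of the markup points that carry an annotation."""
    if len(landmarks) != NUM_POINTS:
        raise ValueError(f"expected {NUM_POINTS} slots, got {len(landmarks)}")
    return [i for i, p in enumerate(landmarks) if p is not None]


def coverage(faces: List[Landmarks]) -> float:
    """Fraction of all markup slots that are annotated across a set of faces."""
    total = NUM_POINTS * len(faces)
    annotated = sum(len(visible_indices(f)) for f in faces)
    return annotated / total if total else 0.0


def mean_visible_error(pred: List[Point], gt: Landmarks) -> float:
    """Mean Euclidean error, computed only over ground-truth landmarks that are visible."""
    idx = visible_indices(gt)
    if not idx:
        raise ValueError("no visible landmarks to evaluate")
    return sum(math.dist(pred[i], gt[i]) for i in idx) / len(idx)


# Hypothetical faces: a profile view with 12 visible points and a fully visible frontal view.
profile: Landmarks = [(100.0 + i, 50.0) for i in range(12)] + [None] * (NUM_POINTS - 12)
frontal: Landmarks = [(float(i), float(i)) for i in range(NUM_POINTS)]
print(visible_indices(profile))                # indices 0 through 11
print(round(coverage([profile, frontal]), 3))  # 0.786, i.e. (12 + 21) / 42
pred = [(float(i) + 3.0, float(i) + 4.0) for i in range(NUM_POINTS)]
print(mean_visible_error(pred, frontal))       # 5.0
```

Keeping a fixed slot per markup point, instead of a compact list of visible points, keeps the point identity explicit. Predictions and ground truth can then be compared index by index.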
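FDDB ground-truth files write each face ellipse as one line of the form `major_axis_radius minor_axis_radius angle center_x center_y 1`. The sketch below parses such a line and derives the tightest axis-aligned rectangle around the ellipse. This can be handy when relating the ellipse and rectangle annotations. The sketch assumes the angle is given in radians as the orientation of the major axis relative to the image x-axis. The trailing field is ignored. The class and function names are illustrative and not part of the AFLW tools.

```python
import math
from dataclasses import dataclass
from typing import Tuple


@dataclass(frozen=True)
class FaceEllipse:
    major_radius: float  # semi-major axis length in pixels
    minor_radius: float  # semi-minor axis length in pixels
    angle: float         # major-axis orientation in radians (assumed w.r.t. the x-axis)
    center_x: float
    center_y: float


def parse_fddb_ellipse(line: str) -> FaceEllipse:
    """Parse one FDDB-style ellipse line; the sixth field is ignored."""
    fields = line.split()
    if len(fields) != 6:
        raise ValueError(f"expected 6 fields, got {len(fields)}: {line!r}")
    major, minor, angle, cx, cy = (float(v) for v in fields[:5])
    return FaceEllipse(major, minor, angle, cx, cy)


def ellipse_to_rect(e: FaceEllipse) -> Tuple[float, float, float, float]:
    """Tightest axis-aligned rectangle (x, y, w, h) enclosing the rotated ellipse."""
    c, s = math.cos(e.angle), math.sin(e.angle)
    # Half-extents of a rotated ellipse along the image axes
    half_w = math.hypot(e.major_radius * c, e.minor_radius * s)
    half_h = math.hypot(e.major_radius * s, e.minor_radius * c)
    return (e.center_x - half_w, e.center_y - half_h, 2 * half_w, 2 * half_h)


# Hypothetical upright face: major axis vertical, i.e. angle = pi/2
upright = parse_fddb_ellipse("60.0 40.0 1.5707963267948966 200.0 150.0 1")
print(ellipse_to_rect(upright))  # approximately (160.0, 90.0, 80.0, 120.0)
```

The half-extents come from maximizing the parametric ellipse coordinates over the curve parameter. Because only squared sines and cosines enter, the sign convention of the angle does not change the resulting rectangle.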
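The coarse head pose is obtained by fitting a mean 3D face with POSIT (Pose from Orthography and Scaling with ITerations, by DeMenthon and Davis). POSIT starts from a scaled-orthographic approximation and iteratively corrects it toward full perspective. The numpy sketch below illustrates the algorithm in general form; it is not AFLW's implementation.

The six 3D points only stand in for a mean 3D face model. They, the focal length and the synthetic pose are all hypothetical values, chosen so that the result can be checked. The sketch assumes image coordinates measured relative to the principal point, at least four non-coplanar model points, and the first model point as the reference point.

```python
import numpy as np


def posit(model_pts, image_pts, focal, n_iter=100, tol=1e-10):
    """Estimate rotation R and translation t (camera coordinates of model point 0).

    model_pts: (N, 3) model points, N >= 4, not all coplanar.
    image_pts: (N, 2) image points relative to the principal point.
    focal: focal length in pixels.
    """
    model_pts = np.asarray(model_pts, dtype=float)
    image_pts = np.asarray(image_pts, dtype=float)
    A = model_pts[1:] - model_pts[0]   # model vectors from the reference point
    B = np.linalg.pinv(A)              # least-squares "object matrix", shape (3, N-1)
    x0, y0 = image_pts[0]
    eps = np.zeros(len(A))             # perspective corrections; 0 = scaled orthography
    for _ in range(n_iter):
        # Corrected image coordinates relative to the reference point's projection
        xs = image_pts[1:, 0] * (1.0 + eps) - x0
        ys = image_pts[1:, 1] * (1.0 + eps) - y0
        I, J = B @ xs, B @ ys          # scaled first two rows of the rotation
        s1, s2 = np.linalg.norm(I), np.linalg.norm(J)
        scale = 0.5 * (s1 + s2)        # scale = focal / depth of the reference point
        i, j = I / s1, J / s2
        k = np.cross(i, j)
        k /= np.linalg.norm(k)
        z0 = focal / scale
        new_eps = A @ k / z0           # update the perspective corrections
        converged = np.max(np.abs(new_eps - eps)) < tol
        eps = new_eps
        if converged:
            break
    R = np.vstack([i, j, k])
    t = np.array([x0 / scale, y0 / scale, z0])
    return R, t


# --- Usage with a synthetic pose (all values hypothetical) ---
def rotation(yaw, pitch):
    cy, sy = np.cos(yaw), np.sin(yaw)
    cp, sp = np.cos(pitch), np.sin(pitch)
    r_yaw = np.array([[cy, 0.0, sy], [0.0, 1.0, 0.0], [-sy, 0.0, cy]])
    r_pitch = np.array([[1.0, 0.0, 0.0], [0.0, cp, -sp], [0.0, sp, cp]])
    return r_yaw @ r_pitch


model = np.array([
    [0.0, 0.0, 0.0],        # nose tip (reference point)
    [-30.0, -35.0, -30.0],  # left eye corner
    [30.0, -35.0, -30.0],   # right eye corner
    [-25.0, 30.0, -25.0],   # left mouth corner
    [25.0, 30.0, -25.0],    # right mouth corner
    [0.0, 65.0, -20.0],     # chin
])
focal = 800.0
R_true = rotation(np.radians(20.0), np.radians(10.0))
t_true = np.array([10.0, -5.0, 500.0])
cam = model @ R_true.T + t_true            # model points in camera coordinates
image = focal * cam[:, :2] / cam[:, 2:3]   # perspective projection
R_est, t_est = posit(model, image, focal)
```

Using the pseudo-inverse lets the same code accept more than four points, solving for the rotation rows in a least-squares sense. Angles such as yaw, pitch and roll would then be extracted from `R_est` under whatever Euler-angle convention a project uses.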

Given the nature of the database and its comprehensive annotation, we think it is well suited for training and testing algorithms for

- facial feature localization

- multi-view face detection

- coarse head pose estimation.

License Agreement

By downloading the database you agree to the following restrictions:

- The AFLW database is available for non-commercial research purposes only.

- The AFLW database includes images obtained from Flickr, which are not the property of Graz University of Technology. Graz University of Technology is responsible neither for the content nor for the meaning of these images. Any use of the images must be negotiated with the respective picture owners, according to the Yahoo terms of use. In particular, you agree not to reproduce, duplicate, copy, sell, trade, resell or exploit for any commercial purposes any portion of the images and any portion of derived data.

- You agree not to further copy, publish or distribute any portion of the AFLW database. The only exception is that copies of the database may be made for internal use at a single site within the same organization.

- All submitted papers or any publicly available text using the AFLW database must cite our paper.

- The organization represented by you will be listed as a user of the AFLW database.

Download Instructions

If you agree with the terms of the license agreement, you can request access to AFLW via this request form.

Additional Notes

- Unfortunately, some annotations are still of limited quality. As no benchmarks on AFLW are planned at this point, we intend to continuously improve the quality of the annotations in upcoming releases.

- The provided software and code are meant as usage examples for AFLW. There is no detailed documentation, and example code is limited.

- We welcome feedback and discussion. If you have comments, just drop us a few lines.

Citation

If you use AFLW, please cite our paper:

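M. Koestinger, P. Wohlhart, P. M. Roth, and H. Bischof. Annotated Facial Landmarks in the Wild: A Large-scale, Real-world Database for Facial Landmark Localization. In Proc. First IEEE International Workshop on Benchmarking Facial Image Analysis Technologies, 2011.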

BibTeX reference for convenience:

@inproceedings{koestinger11a,
  author = {Martin Koestinger and Paul Wohlhart and Peter M. Roth and Horst Bischof},
  title = {{Annotated Facial Landmarks in the Wild: A Large-scale, Real-world Database for Facial Landmark Localization}},
  booktitle = {{Proc. First IEEE International Workshop on Benchmarking Facial Image Analysis Technologies}},
  year = {2011}
}

Acknowledgements

The work was supported by the FFG projects MDL (818800) and SECRET (821690) under the Austrian Security Research Programme KIRAS. We want to thank all the people who were involved in the annotation process. Special thanks go to the interns at the institute and to the colleagues from the Documentation Center of the National Defense Academy of Austria.

References

[1] A. Angelova, Y. Abu-Mostafa, and P. Perona. Pruning training sets for learning of object categories. In Proc. CVPR, 2005.

[2] O. Aran, I. Ari, M. A. Guvensan, H. Haberdar, Z. Kurt, H. I. Turkmen, A. Uyar, and L. Akarun. A database of non-manual signs in Turkish Sign Language. In Proc. Signal Processing and Communications Applications, 2007.

[3] O. Jesorsky, K. J. Kirchberg, and R. W. Frischholz. Robust face detection using the Hausdorff distance. In Proc. Audio and Video-based Biometric Person Authentication, 2001.

[4] A. Kasiński, A. Florek, and A. Schmidt. The PUT face database. Image Processing & Communications, pages 59–64, 2008.

[5] A. Martinez and R. Benavente. The AR face database. Technical Report 24, Computer Vision Center, University of Barcelona, 1998.

[6] K. Messer, J. Matas, J. Kittler, and K. Jonsson. XM2VTSDB: The extended M2VTS database. In Proc. Audio and Video-based Biometric Person Authentication, 1999.

[7] S. Milborrow, J. Morkel, and F. Nicolls. The MUCT Landmarked Face Database. In Proc. Pattern Recognition Association of South Africa, 2010.

[8] N. Aifanti, C. Papachristou, and A. Delopoulos. The MUG facial expression database. In Proc. Workshop on Image Analysis for Multimedia Interactive Services, 2005.

[9] M. M. Nordstrom, M. Larsen, J. Sierakowski, and M. B. Stegmann. The IMM face database - an annotated dataset of 240 face images. Technical report, Informatics and Mathematical Modelling, Technical University of Denmark, DTU, 2004.

[10] H. Rowley, S. Baluja, and T. Kanade. Rotation invariant neural network-based face detection. Technical Report CMU-CS-97-201, Computer Science Department, Carnegie Mellon University (CMU), 1997.

[11] H. Schneiderman and T. Kanade. A statistical model for 3D object detection applied to faces and cars. In Proc. CVPR, 2000.
